April 13, 2026

The Early Adopter Tax

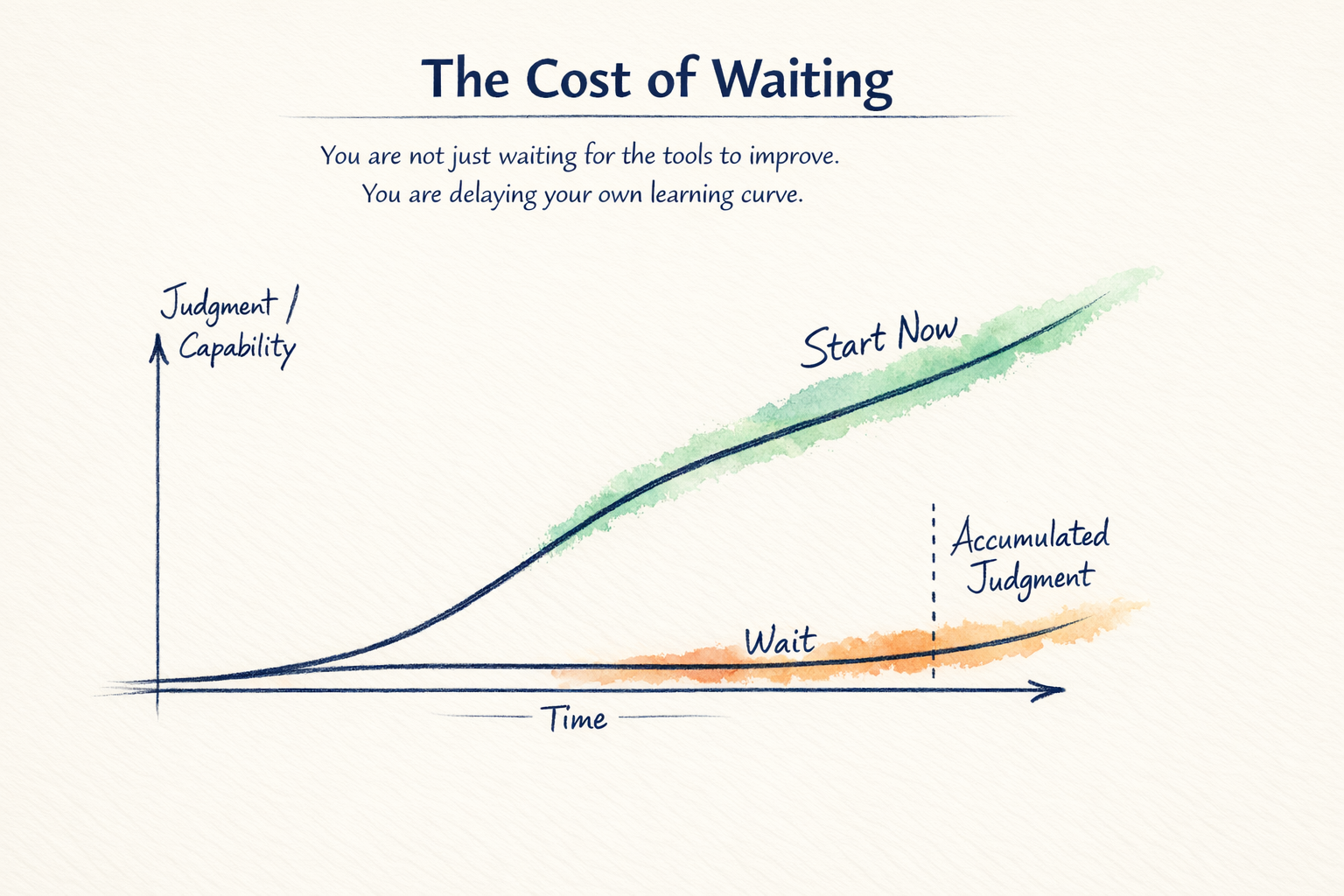

You can wait for better tools. Or you can start building the judgment to use them.

Working closely with frontier LLM tools comes with a side effect: your expertise expires quickly.

The prompts that mattered a few months ago are no longer needed. The workaround you learned through trial and error becomes a built-in feature. The workflow that feels advanced starts to look like an early draft of the product itself.

That is the cost of being early. It is a cost I am willing to pay. Redundant learning is not wasted learning.

While the scaffolding will keep changing, the real asset is the judgment you build about where these systems fit, where they break, what good output looks like, how to evaluate quality, how to keep humans in the loop, how to redesign workflows, and how to govern risk.

A lot of what gets called prompt engineering is a temporary adaptation to current product limitations. As models improve, many of today's prompting tactics become less important. The judgment you build while using them is what survives model churn.

Some might argue that you are better off waiting. And sometimes waiting is rational. Costs are dropping. Interfaces are improving. Default capabilities absorb yesterday's hacks.

But waiting has a cost.

You are not just waiting for the tools to mature. You are also delaying your own learning curve. That is not a price I am willing to pay.

When I started building a simple Connect-K game with OpenAI Codex, the interaction model was much more manual. Progress came from carefully constructed prompts, step-by-step instructions, and constant steering. I would break the work into small pieces, specify constraints up front, call out edge cases, and guide the model through implementation details one layer at a time.

You learn quickly, but much of that learning is about compensating for the limitations of the tools.

A few months later, the same kind of project can be built much more efficiently. The models are more capable. You can describe the system at a higher level, give a clearer objective, and let the model generate more of the structure, handle more edge cases, and recover from more of its own mistakes. Less time spelling out every step. More time evaluating what comes back.

That change also affects how the work is organized. Before, progress depended on tightly managing one thread at a time. Now it is increasingly possible to break a project into parallel workstreams, let multiple agents tackle different parts, and then validate and merge the best work back together. The role shifts from shaping every input to evaluating outputs, defining boundaries, setting up validation, and deciding what should be automated versus what should remain tightly controlled.

Anthropic has shown that higher-tenure users are more effective in their interactions, even after controlling for task. The signal is directionally clear: experience compounds.

The companies getting real value from generative AI are not the ones running isolated prompts. They are redesigning how work happens. A team that replaces a multi-day research cycle with a structured workflow learns something deeper than efficiency. They learn where the process itself needs to change.

That is where the return comes from.

If you wait for the category to stabilize before engaging, you risk forgoing the very experience required to use it well.

The advantage of starting now is not mastery of today's tools. That is a short-lived asset. The advantage is accelerating the accumulation of judgment.

Teams that engage early learn where trust is earned and where review is required. They learn which work should be accelerated, which should be restructured, and which should remain human-led. They learn how to evaluate outputs instead of admiring demos.

They also learn which layers persist: evals, workflow design, context management, integration patterns, governance. Not the glue. The structure underneath.

My bias is still to start now. Run small experiments. Measure them. Treat prompt craft as provisional. Treat evals, workflow design, and change management as the real assets.

The cost of early adoption is paying tuition on tactics. The return is understanding the shape of the work before the tools make it look easy.

This article reflects my personal perspectives on product management and AI. It does not represent the official position of my employer or any affiliated organization.